I have difficulty understanding how gradient descent works in neural networks.

Solved by TensorFlow Playground

The Problem

Gradient descent and the other core concepts behind neural networks can be hard to grasp. Multi-layer networks are complex, and it is not obvious what their parameters actually do: in particular, how changing the weights or the activation functions affects the network's behavior. Overfitting, and how to interpret the distribution of the data, are further sources of uncertainty. Experimenting hands-on with different datasets, or with your own data, can help with these difficulties.
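To make the core idea concrete, here is a minimal sketch of gradient descent in plain Python. It assumes the simplest possible "network", a single linear neuron `y_hat = w*x + b`, trained with mean squared error on a hypothetical toy dataset. Each step moves `w` and `b` a little against their gradients, scaled by the learning rate. The names `loss_and_grads` and `train` are illustrative only, not part of any library.

```python
# Minimal gradient descent on a single linear neuron, y_hat = w*x + b,
# minimizing mean squared error (MSE). Pure Python; toy data only.

def loss_and_grads(w, b, xs, ys):
    """Return the MSE and its partial derivatives with respect to w and b."""
    n = len(xs)
    loss = dw = db = 0.0
    for x, y in zip(xs, ys):
        err = (w * x + b) - y      # prediction error on one example
        loss += err * err / n
        dw += 2 * err * x / n      # contribution to dL/dw
        db += 2 * err / n          # contribution to dL/db
    return loss, dw, db


def train(xs, ys, learning_rate, steps=200):
    """Run plain full-batch gradient descent starting from w = b = 0."""
    w = b = 0.0
    for _ in range(steps):
        _, dw, db = loss_and_grads(w, b, xs, ys)
        # Move each parameter a small step against its gradient
        w -= learning_rate * dw
        b -= learning_rate * db
    final_loss, _, _ = loss_and_grads(w, b, xs, ys)
    return w, b, final_loss


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [1.0, 3.0, 5.0, 7.0]      # toy targets generated from y = 2x + 1
    for lr in (0.01, 0.1, 0.3):
        w, b, loss = train(xs, ys, lr)
        print(f"learning rate {lr}: w={w:.3f}, b={b:.3f}, loss={loss:.3g}")
```

With these toy values, a learning rate of 0.1 settles at w ≈ 2, b ≈ 1; 0.01 is still converging after the same 200 steps; and 0.3 overshoots by more on every step until it diverges. That is the same trade-off you can observe by changing the learning rate in the Playground.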

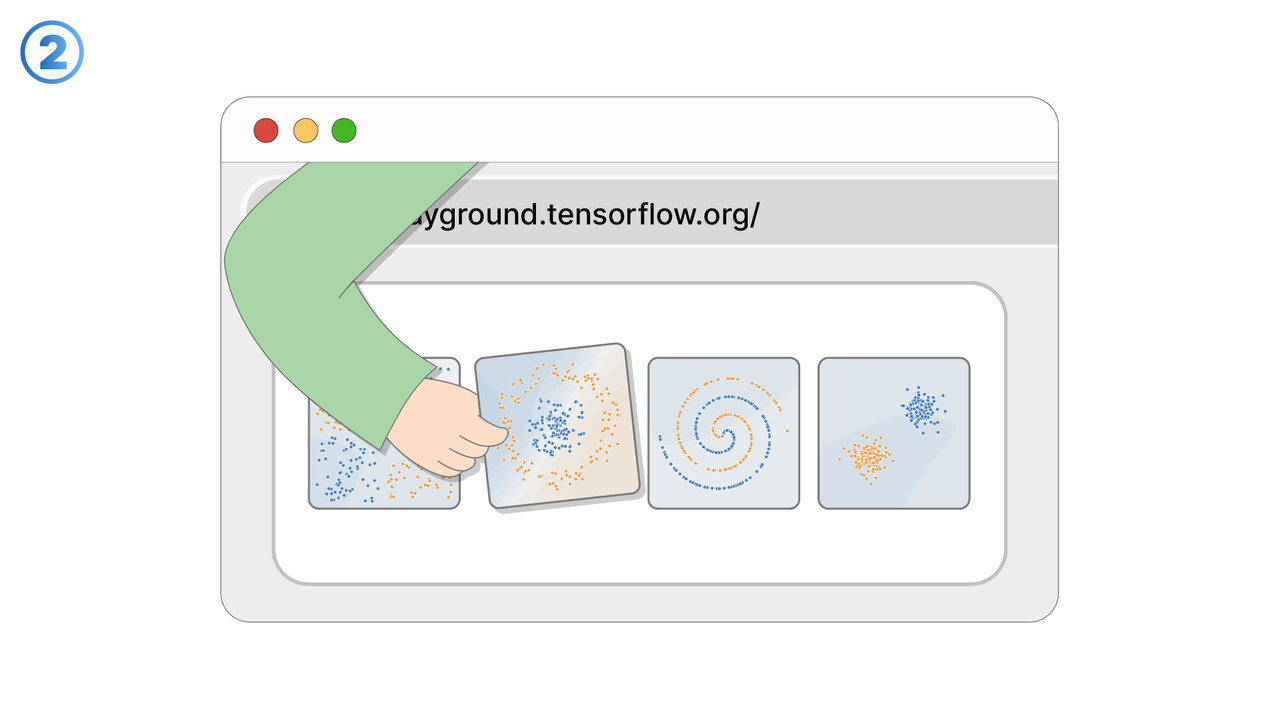

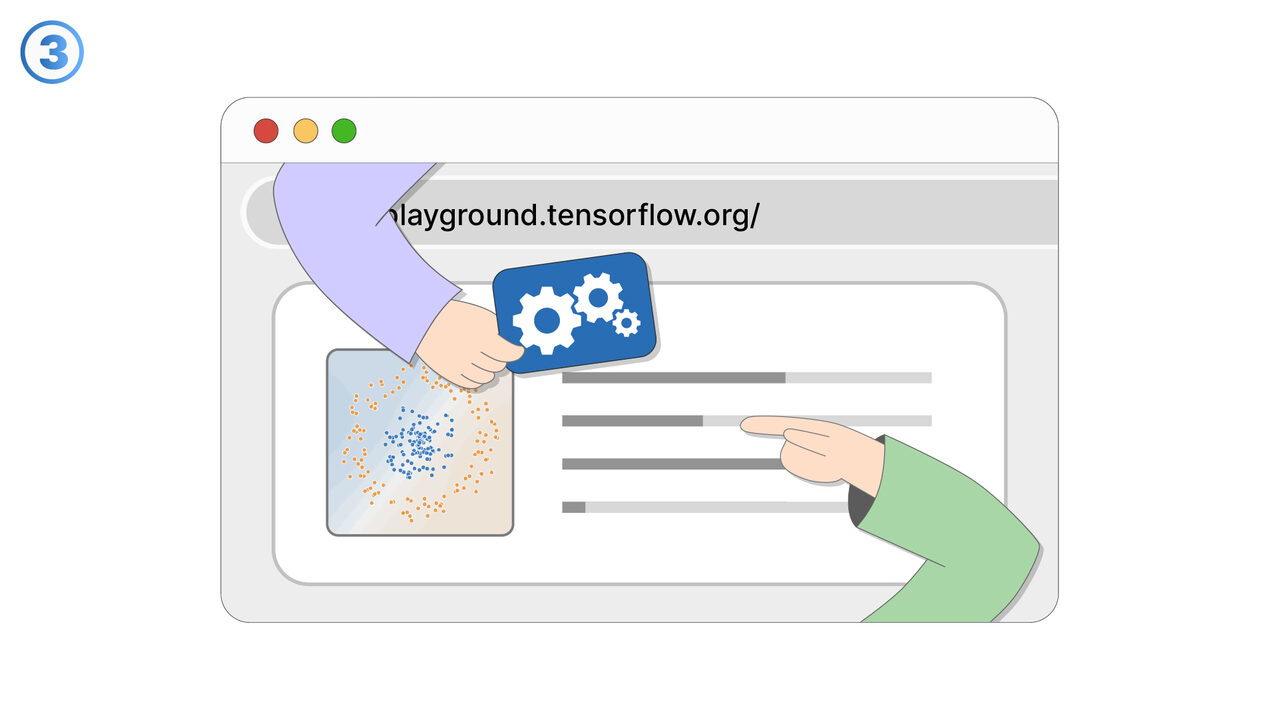

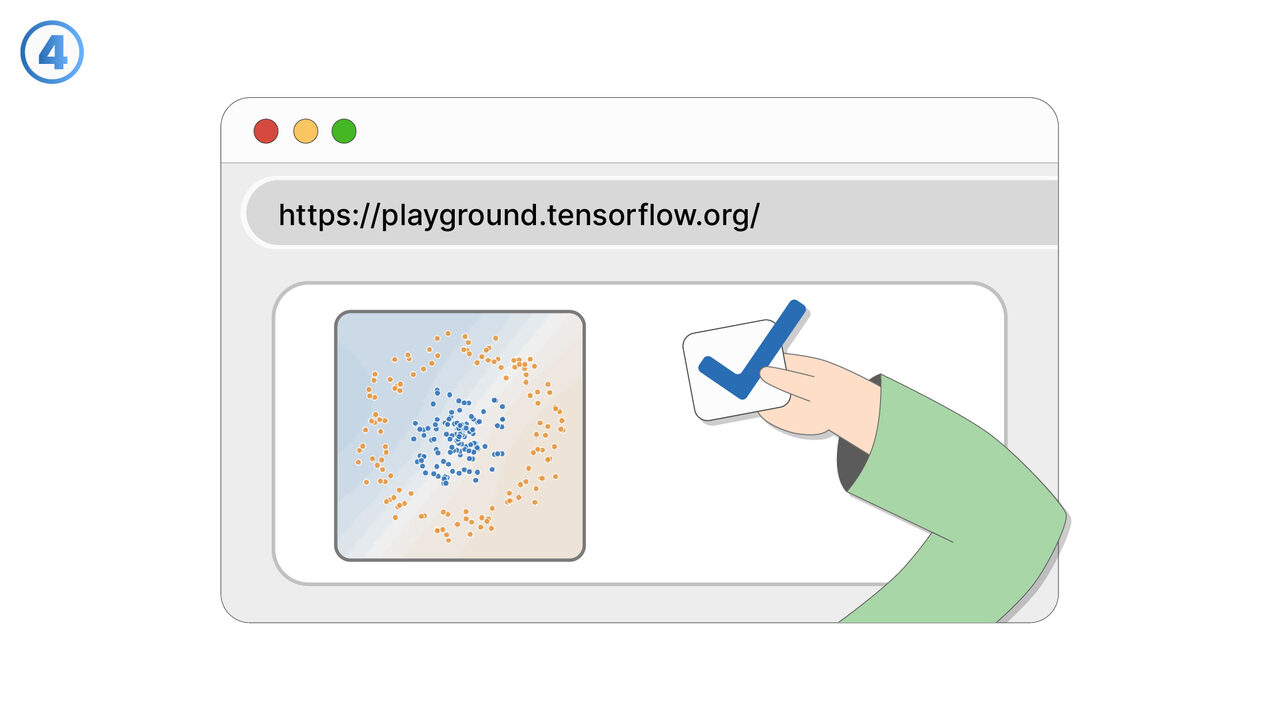

Screenshots

The Solution

TensorFlow Playground tackles this by letting you train a small neural network directly in the browser and watch it learn. You can change hyperparameters such as the learning rate, the activation function, the regularization, and the number of hidden layers and neurons, and immediately see how the network's output changes. The connections between neurons are drawn with a thickness and color that reflect their weights, so the effect of weight updates is visible as training runs, and the output panel shows the decision boundary the network has learned. You can experiment with several built-in datasets and adjust the noise level and the ratio of training to test data, which gives practical experience of how the data's distribution affects learning. Because training loss and test loss are displayed side by side, overfitting shows up directly as a growing gap between the two. This interactive, visual approach makes neural networks and gradient descent considerably easier to understand.
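The Playground shows gradient descent updating every weight of a multi-layer network at once. Below is a hedged sketch of how those updates can be computed with backpropagation, assuming a tiny hypothetical network: two inputs, one hidden layer of four tanh neurons, a linear output, MSE loss, and the four XOR points as toy data. This is similar in spirit to the Playground's XOR-shaped dataset, not its actual implementation; all names are illustrative.

```python
import math
import random

# Toy XOR data (an illustrative assumption, not the Playground's dataset)
XS = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]
YS = [0.0, 1.0, 1.0, 0.0]


def init_params(hidden=4, seed=0):
    """Small random weights for a hypothetical 2 -> hidden -> 1 network."""
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(hidden)],
        "b1": [0.0] * hidden,
        "W2": [rng.uniform(-1, 1) for _ in range(hidden)],
        "b2": 0.0,
    }


def loss_and_grads(p):
    """Forward pass, mean squared error, and backpropagated gradients."""
    n, hidden = len(XS), len(p["b1"])
    g = {"W1": [[0.0, 0.0] for _ in range(hidden)], "b1": [0.0] * hidden,
         "W2": [0.0] * hidden, "b2": 0.0}
    loss = 0.0
    for (x1, x2), y in zip(XS, YS):
        # Forward: tanh hidden layer, then a linear output neuron
        h = [math.tanh(w[0] * x1 + w[1] * x2 + b) for w, b in zip(p["W1"], p["b1"])]
        out = sum(v * hj for v, hj in zip(p["W2"], h)) + p["b2"]
        err = out - y
        loss += err * err / n
        # Backward: chain rule from the loss back to every weight
        d_out = 2 * err / n
        g["b2"] += d_out
        for j in range(hidden):
            g["W2"][j] += d_out * h[j]
            d_pre = d_out * p["W2"][j] * (1 - h[j] ** 2)  # tanh'(z) = 1 - tanh(z)^2
            g["W1"][j][0] += d_pre * x1
            g["W1"][j][1] += d_pre * x2
            g["b1"][j] += d_pre
    return loss, g


def step(p, g, lr):
    """One gradient-descent update, applied to the parameters in place."""
    for j in range(len(p["b1"])):
        for k in range(2):
            p["W1"][j][k] -= lr * g["W1"][j][k]
        p["b1"][j] -= lr * g["b1"][j]
        p["W2"][j] -= lr * g["W2"][j]
    p["b2"] -= lr * g["b2"]


if __name__ == "__main__":
    params = init_params()
    before, _ = loss_and_grads(params)
    for _ in range(2000):
        _, grads = loss_and_grads(params)
        step(params, grads, lr=0.05)
    after, _ = loss_and_grads(params)
    print(f"MSE before training: {before:.4f}, after: {after:.4f}")
```

Swapping `math.tanh` for another activation (together with its derivative in the backward pass) is the code-level counterpart of changing the activation setting in the Playground.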

External Resource

https://playground.tensorflow.org/

Use this tool as a solution to the following problems

- I have difficulty understanding the complex concepts behind neural networks and need a tool to improve my understanding.

- I have difficulty understanding the more complex aspects of machine learning and neural networks.

- I am having trouble identifying overfitting in neural networks and need a tool to visualize it and explore it through experimentation.

- I need an interactive tool to expand my understanding of neural networks and visualize the effects of changes to weights and activation functions.

- I am having trouble understanding neural networks and interpreting datasets.

- I need an interactive tool that helps me better understand neural networks and machine learning concepts, and experiment with various datasets.

- I'm having trouble adapting neural networks for specific tasks.

- I am looking for a tool that helps me to better understand neural networks through visual learning.

- I need an interactive tool to deepen my knowledge in neural networks and explore various aspects of machine learning.
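For the overfitting item above, the signal the Playground exposes is the gap between training loss and test loss. A minimal sketch of turning that into a check, assuming you have recorded both losses once per epoch: the hypothetical helper `overfitting_epoch` reports the epoch where test loss bottomed out, provided test loss then fails to improve for `patience` epochs while training loss keeps falling. This is a simple early-stopping-style heuristic, not something the Playground itself computes.

```python
def overfitting_epoch(train_losses, test_losses, patience=3):
    """Return the epoch with the lowest test loss if test loss then fails to
    improve for `patience` epochs while training loss keeps strictly falling;
    otherwise return None. (Hypothetical helper for illustration.)"""
    if len(train_losses) != len(test_losses):
        raise ValueError("loss histories must have the same length")
    best = 0  # epoch with the lowest test loss seen so far
    for epoch, loss in enumerate(test_losses):
        if loss < test_losses[best]:
            best = epoch
        elif epoch - best >= patience:
            window = train_losses[best:epoch + 1]
            # Training loss still falling while test loss has stalled or risen
            if all(later < earlier for earlier, later in zip(window, window[1:])):
                return best
    return None


if __name__ == "__main__":
    # Toy loss histories, made up for illustration only
    train = [0.50, 0.40, 0.30, 0.22, 0.15, 0.10, 0.06]
    test = [0.52, 0.43, 0.35, 0.33, 0.36, 0.40, 0.45]
    print(overfitting_epoch(train, test))  # → 3
```

In the toy run, training loss keeps dropping after epoch 3 while test loss climbs, which is exactly the widening gap the Playground's two loss readouts make visible.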
